I recently decided to "vibe-code" my first two apps. I wanted to see what the hype was about, but I’ll be honest right out of the gate: I’m not a fan.

The problem with vibe-coding is that you end up building things you don’t actually understand. You aren't learning the ins and outs of the system, and eventually, that’s going to catch up with you. I see it all the time in college: peers using LLMs to breeze through homework and projects without any actual learning happening.

If I let an AI build everything and then deploy it, what happens when the AI hits a wall? I’d have to spend an eternity trying to decipher a codebase I didn't write, assuming I even have the skills to fix it.

The Experiment

I saw a video about Cloudflare using an AI model to completely rewrite Next.js, so I figured I’d give it a shot. I vibe-coded two things:

- A Chrome Extension: A peer-to-peer web conferencing tool with a signaling server and authentication.

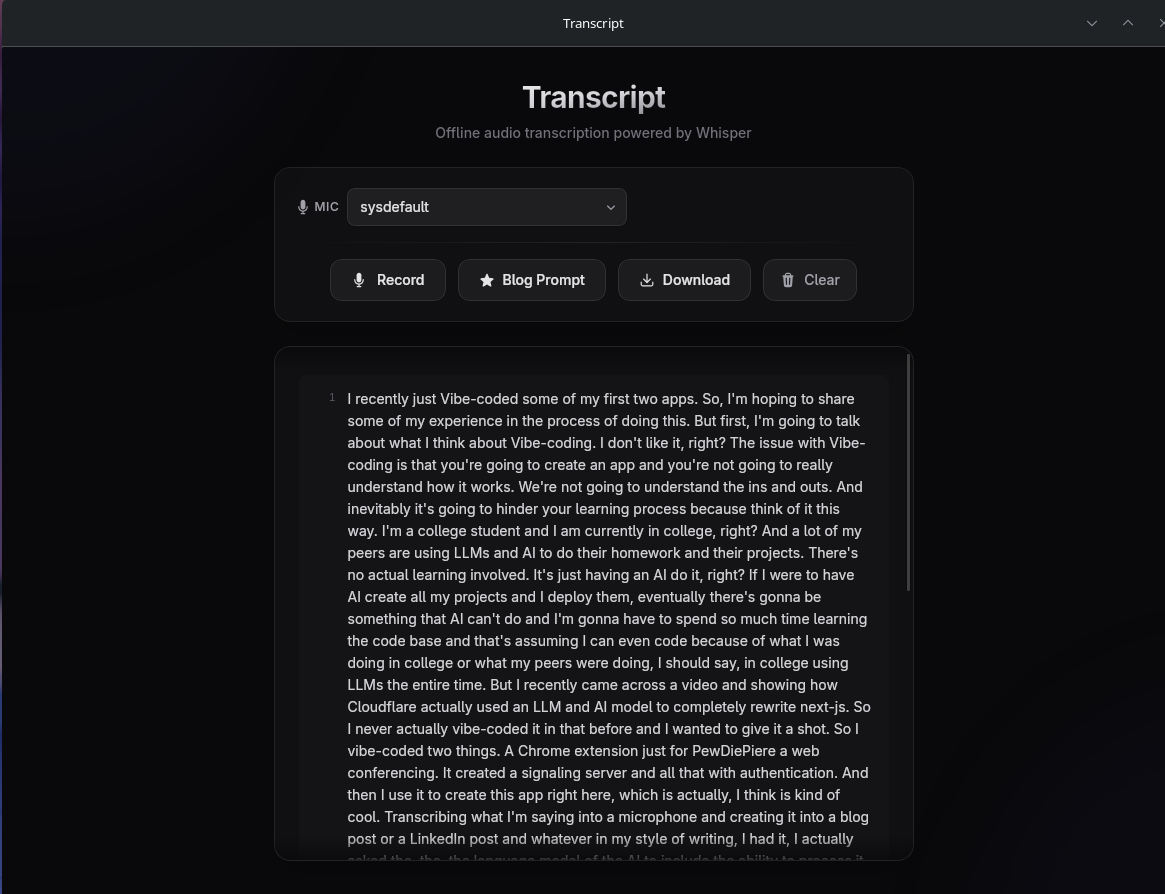

- A Transcription App: The very app I’m using to write this. It transcribes audio and turns it into blog or LinkedIn posts in my writing style. I even had the AI add an option to process everything locally by downloading a small LLM into the browser, or to copy a prompt and send it to your favorite AI agent instead.

The Security Reality Check

Functionally, the apps worked pretty well. But from a security standpoint? It was a disaster.

When I looked at the authentication the AI generated for the Chrome extension, there was a glaring vulnerability: it actually exposed the user password through an API call. That should be Security 101, yet the AI missed it entirely.
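The generated extension code isn't reproduced here, so what follows is only a hypothetical TypeScript sketch of the general pattern that prevents this kind of leak. It assumes a stored user record with a `passwordHash` field. All the names (`StoredUser`, `PublicUser`, `toPublicUser`, `handleGetProfile`) are invented for illustration. The idea is to build every API response from an explicit allowlist of safe fields rather than serializing the whole user record.

```typescript
// Hypothetical shape of a stored user record; field names are assumptions,
// not taken from the extension's actual code.
interface StoredUser {
  id: string;
  email: string;
  displayName: string;
  passwordHash: string; // should be a salted hash, never the plaintext password
}

// Only the fields that are safe to send back to the client.
interface PublicUser {
  id: string;
  email: string;
  displayName: string;
}

// Vulnerable pattern (what to avoid): serializing the whole record,
// e.g. `return JSON.stringify(user);`, sends passwordHash to the caller.

// Safer pattern: copy allowlisted fields one by one into a new object,
// so any secret field added to StoredUser later is excluded by default.
function toPublicUser(user: StoredUser): PublicUser {
  return {
    id: user.id,
    email: user.email,
    displayName: user.displayName,
  };
}

// Hypothetical handler for a "get current user" API call.
function handleGetProfile(user: StoredUser): string {
  return JSON.stringify(toPublicUser(user));
}

// Example record with a placeholder (not real) hash value.
const alice: StoredUser = {
  id: "u_123",
  email: "alice@example.com",
  displayName: "Alice",
  passwordHash: "$2b$12$placeholderplaceholderplaceholde",
};

console.log(handleGetProfile(alice));
// prints {"id":"u_123","email":"alice@example.com","displayName":"Alice"}
```

The type checker won't enforce this on its own. A full `StoredUser` is structurally assignable to `PublicUser`, so writing `return user;` inside `toPublicUser` would still compile and still leak the hash at runtime. The field-by-field copy is what actually keeps the secret out of the response.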

The transcription app was better, mostly because it runs entirely offline and locally, so there’s less of an attack surface. But that one security flaw in the first app confirmed my fears.

The Bottom Line

Vibe-coding is a neat trick for people who don't know how to program, but if you’re actually trying to be a programmer (or an accountant, or anything else), learn your craft. Don’t just have the AI do your work for you.

Whether you’re in cybersecurity or software engineering, relying on AI is just going to hold back your growth in the long run. I actually wrote a paper on this for school, and my hands-on experiment only reinforced the point.

I’ve since uploaded the code for these experiments to my GitHub. I also went in and made some manual changes and fixes to the generated code to get it where it needed to be. You can check out the repositories here.

Behind the Scenes

I recently vibe-coded my first two apps, so I'm hoping to share some of my experience with the process. But first, I'm going to talk about what I think about vibe-coding. I don't like it. The issue with vibe-coding is that you're going to create an app and not really understand how it works. You're not going to understand the ins and outs, and inevitably it's going to hinder your learning process.

Think of it this way. I'm currently in college, and a lot of my peers are using LLMs and AI to do their homework and their projects. There's no actual learning involved; it's just having an AI do it. If I were to have AI create all my projects and deploy them, eventually there's going to be something the AI can't do, and I'm going to have to spend so much time learning the codebase. And that's assuming I can even code, given what my peers in college were doing: using LLMs the entire time.

But I recently came across a video showing how Cloudflare used an AI model to completely rewrite Next.js. I had never vibe-coded anything before, and I wanted to give it a shot. So I vibe-coded two things. The first is a Chrome extension for peer-to-peer web conferencing; it created a signaling server and all that, with authentication. Then I used vibe-coding to create this app right here, which I think is kind of cool. It transcribes what I'm saying into a microphone and turns it into a blog post or a LinkedIn post in my style of writing. I actually asked the AI to include the ability to process it locally, including downloading a very small LLM into the browser and running it there, or you can just copy a prompt and send it to your favorite AI agent.